Design Architecture, Geoffrey Hinton, Networks, GLOM, Vendors, Visual Opinion, Personal Ideas, Intelligence


Deep learning set off the latest revolution in AI, transforming computer vision and the field as a whole. Hinton believes deep learning should be almost all that is needed to fully replicate human intelligence.

But despite the rapid progress, there are still major challenges. Expose a neural network to an unfamiliar data set or a foreign environment, and it reveals itself to be brittle and inflexible. Self-driving cars and essay-writing language generators impress, but things can go awry. AI vision systems can be easily confused: a coffee mug recognized from the side would be an unknown from above if the system had not been trained on that view; and with the manipulation of a few pixels, a panda can be mistaken for an ostrich, or even a school bus.

GLOM addresses two of the most difficult problems for visual perception systems: understanding a whole scene in terms of objects and their natural parts; and recognizing objects when they are seen from a new viewpoint. (GLOM’s focus is on vision, but Hinton hopes the idea can be applied to language as well.)

An object such as Hinton’s face, for example, is made up of his lively if dog-tired eyes (too many people asking questions, too little sleep), his mouth and ears, and a prominent nose, all topped by mostly gray hair. And given his nose, he is easily recognized even at first glance in profile.

Both of these factors, the part-whole relationship and the viewpoint, are, from Hinton’s perspective, crucial to how humans see. “If GLOM works,” he says, “it will do perception in a much more human-like way than current neural networks.”

Grouping parts into wholes, however, can be a hard problem for computers, because parts are sometimes ambiguous. A circle could be an eye, or a doughnut, or a wheel. As Hinton explains it, the first generation of AI vision systems tried to recognize objects by relying mostly on the geometry of the part-whole relationship: the spatial orientation among the parts and between the parts and the whole. The second generation instead relied mostly on deep learning, letting the neural network train on large amounts of data. With GLOM, Hinton combines the best aspects of both approaches.

“There’s a certain intellectual humility that I like about it,” says Gary Marcus, co-founder and CEO of Robust.AI and a well-known critic of the heavy reliance on deep learning. Marcus admires Hinton’s willingness to challenge something that made him famous, and to admit it doesn’t quite work. “It’s brave,” he says. “And it’s very important to say, ‘I’m trying to think outside the box.’”

GLOM architecture

In making GLOM, Hinton tried to model some of the mental shortcuts (intuitive strategies, or heuristics) that people use to make sense of the world. “GLOM, like much of Geoff’s work, is about looking at heuristics that people seem to have, building neural networks that could themselves have those heuristics, and then showing that the networks do better at vision as a result,” says Nick Frosst, a computer scientist at a Toronto-based language startup who worked with Hinton at Google Brain.

With visual perception, one strategy is to parse the parts of an object, such as the different features of a face, and thereby understand the whole. If you see a certain nose, you might recognize it as part of Hinton’s face; it is a part-whole hierarchy. To build a better vision system, Hinton says, “I have a strong intuition that we need to use part-whole hierarchies.” The human brain understands this part-whole composition by forming what is called a “parse tree”: a branching diagram that shows the hierarchical relationship between the whole, its parts, and its subparts. The face is at the top of the tree, and its components (the eyes, nose, ears, and mouth) form the branches below.

One of Hinton’s main goals with GLOM is to replicate the parse tree in a neural network; this would distinguish it from the neural networks that came before. For technical reasons, it is hard to do. “It’s difficult because each individual image would be parsed by a person into a unique parse tree, so we would want a neural network to do the same,” says Frosst. “It’s hard to get something with a static architecture, a neural network, to take on a new structure, a parse tree, for each new image it sees.” Hinton has made several attempts. GLOM is a major revision of his earlier attempt from 2017, combined with other related advances in the field.

“I’m part of a nose!”

GLOM vector

MS TECH | EVIATAR BACH VIA WIKIMEDIA

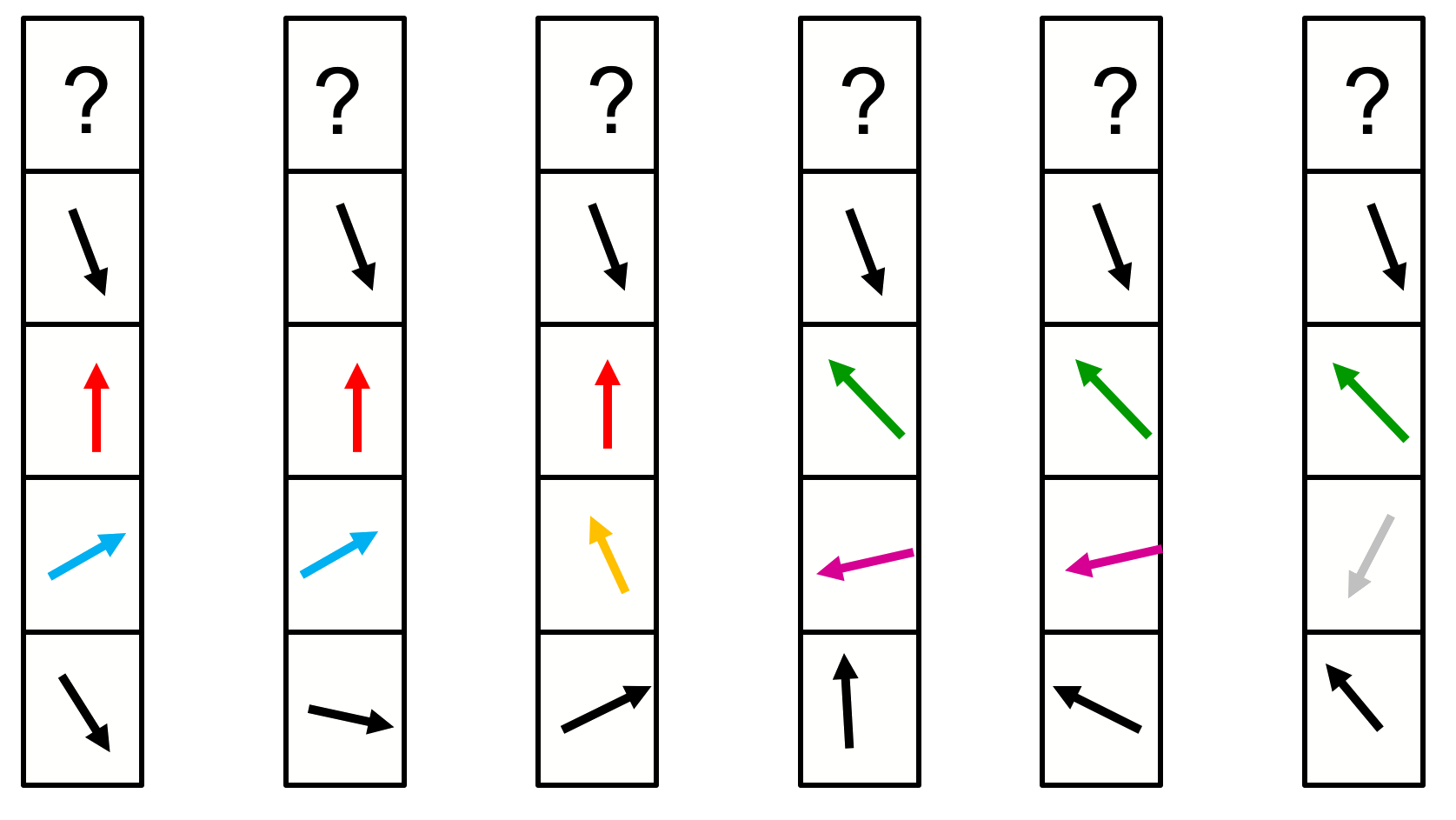

Here is a general way of thinking about the GLOM architecture: the image of interest (say, a photograph of Hinton’s face) is divided into a grid. Each region of the grid is a “location” on the image; one location might contain the iris of an eye, while another might contain the tip of the nose. For each location in the network there are about five layers, or levels. And level by level, the system makes a prediction, with a vector representing the content or information. At a level near the bottom, the vector representing the tip-of-the-nose location might predict: “I’m part of a nose!” And at the next level up, in building a more coherent picture of what is being seen, the vector might predict: “I’m part of a face seen from the side!”
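The layout described above can be pictured as a small data structure. The following is only a minimal sketch under stated assumptions, not Hinton’s implementation: the function name `make_columns`, the 3-by-3 grid, the vector size of 4, and the random initial values are all hypothetical choices made for illustration.

```python
import random

def make_columns(rows, cols, levels=5, dim=4, seed=0):
    """Build state[r][c][level] -> vector (a list of `dim` floats).

    Each grid location holds one vector per level, from low-level parts
    (index 0) up to whole objects (index levels - 1). Values are random
    placeholders; a real system would compute them from the image.
    """
    rng = random.Random(seed)
    return [[[[rng.gauss(0.0, 1.0) for _ in range(dim)]
              for _ in range(levels)]
             for _ in range(cols)]
            for _ in range(rows)]

state = make_columns(rows=3, cols=3)

# All levels stored at one image location, e.g. where the nose tip falls.
nose_tip_column = state[1][2]
low_level_vector = nose_tip_column[0]    # might come to mean "part of a nose"
high_level_vector = nose_tip_column[-1]  # might come to mean "part of a face"
```

Every location carries the same stack of levels, so vectors at the same level in neighboring locations can be compared directly.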

But then the question is, do neighboring vectors at the same level agree? When in agreement, the vectors point in the same direction, toward the same conclusion: “Yes, we both belong to the same nose.” Or, further up the parse tree: “Yes, we both belong to the same face.”

Seeking consensus about the nature of an object (about what, ultimately, the object is), GLOM’s vectors repeatedly, location by location and level by level, average with neighboring vectors beside them, as well as with predicted vectors from the levels above and below.

However, the network does not average “willy-nilly” with just anything nearby, Hinton says. It averages selectively, with neighboring predictions that show similarities. “This is kind of well known in America, this is called an echo chamber,” he says. “What you do is you only accept opinions from people who already agree with you; and then what happens is that you get an echo chamber where a whole bunch of people have exactly the same opinion. GLOM actually uses that in a constructive way.” The analogous phenomenon in Hinton’s system is “islands of agreement.”
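One way to picture this selective averaging is a weighted mean in which similar vectors count for more. The sketch below is an illustration under assumptions rather than GLOM’s actual update rule: it weights neighbors by a softmax over dot products, treats every vector in the list as a neighbor of every other, and leaves out the predictions from the levels above and below. The names `attention_average` and `temperature` are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention_average(vectors, temperature=1.0):
    """One smoothing step over the vectors at a single level.

    Each vector is replaced by a weighted mean of all the vectors, where
    the weights are a softmax of dot-product similarity. Vectors that
    already agree pull each other closer; dissimilar ones barely count.
    """
    updated = []
    for v in vectors:
        scores = [dot(v, u) / temperature for u in vectors]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        updated.append([
            sum(w * u[i] for w, u in zip(weights, vectors)) / total
            for i in range(len(v))
        ])
    return updated

# Two vectors that nearly agree, and one that points the opposite way.
before = [[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0]]
after = attention_average(before, temperature=0.1)
```

With a low temperature, the first two vectors move toward each other while the third is left almost unchanged, which is the echo-chamber effect in miniature.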

“Geoff is a highly unusual thinker …”

Sue Becker

“Imagine a bunch of people in a room, shouting slight variations of the same idea,” says Frosst; or imagine those people as vectors pointing in slight variations of the same direction. “After a while, they would converge on the one idea, and they would all feel it more strongly, because they had it confirmed by the other people around them.” This is how GLOM’s vectors reinforce and amplify their collective predictions about an image.

GLOM uses these islands of agreeing vectors to accomplish the trick of representing a parse tree in a neural network. Whereas some recent neural networks use agreement among vectors for activation, GLOM uses agreement for representation, building up representations of objects within the network. For example, when several vectors agree that they all represent part of the nose, their small cluster of agreement collectively represents the nose in the network’s parse tree for the face. Another small cluster of agreeing vectors might represent the mouth in the parse tree; and the large cluster at the top of the tree would represent the emergent conclusion that the image as a whole is Hinton’s face. “The way the parse tree is represented here,” Hinton explains, “is that at the object level you have a big island; the parts of the object are smaller islands; the subparts are even smaller islands, and so on.”
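To make the idea of islands concrete, the following sketch groups adjacent grid locations whose vectors point in nearly the same direction. It is an illustration only: the cosine-similarity threshold, the four-neighbor adjacency, and the names `cosine` and `find_islands` are assumptions made here, and the code expects nonzero vectors.

```python
import math

def cosine(a, b):
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)

def find_islands(grid, threshold=0.95):
    """Label each cell of a 2D grid of vectors with an island id.

    Adjacent cells (up, down, left, right) join the same island when the
    cosine similarity of their vectors is at least `threshold`.
    """
    rows, cols = len(grid), len(grid[0])
    labels = [[None] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            if labels[r][c] is not None:
                continue
            labels[r][c] = next_id
            stack = [(r, c)]
            while stack:  # flood fill outward from the seed cell
                y, x = stack.pop()
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and labels[ny][nx] is None
                            and cosine(grid[y][x], grid[ny][nx]) >= threshold):
                        labels[ny][nx] = next_id
                        stack.append((ny, nx))
            next_id += 1
    return labels

# Part level: two groups of locations agree among themselves.
part_level = [[[1, 0], [1, 0], [0, 1]],
              [[1, 0], [1, 0], [0, 1]]]
# Object level: every location agrees.
object_level = [[[1, 1], [1, 1], [1, 1]],
                [[1, 1], [1, 1], [1, 1]]]

part_islands = find_islands(part_level)      # [[0, 0, 1], [0, 0, 1]]
object_islands = find_islands(object_level)  # [[0, 0, 0], [0, 0, 0]]
```

Reading the two results together gives a tiny parse tree: one large island at the object level sits above two smaller islands at the part level.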

GEOFFREY HINTON

According to Hinton’s longtime colleague and collaborator Yoshua Bengio, a computer scientist at the University of Montreal, if GLOM manages to solve the engineering challenge of representing a parse tree in a neural network, it would be a feat, and an important one for making neural networks work properly. “Geoff has produced amazingly powerful intuitions many times in his career, many of which have proven right,” says Bengio. “As a result, I pay attention to them, especially when he feels as strongly about them as he does about GLOM.”

The strength of Hinton’s conviction is rooted not only in the echo chamber analogy, but also in mathematical and biological analogies that inspired and justified some of the design decisions in GLOM’s engineering.

“Geoff is a highly unusual thinker in that he is able to draw on complex mathematical concepts and integrate them with biological constraints to develop theories,” says Sue Becker, a former student of Hinton’s who is now a psychologist at McMaster University. “Researchers who are more narrowly focused on either the mathematical theory or the neurobiology are much less likely to solve the puzzle of how both machines and humans might learn and think.”

Turning philosophy into engineering

So far, Hinton’s new idea has been well received, especially in some of the world’s largest echo chambers. “On Twitter, I got a lot of likes,” he says. And a YouTube tutorial laid claim to the term “MeGLOMania.”

Hinton is the first to admit that at the moment GLOM is little more than philosophical musing (he spent a year studying philosophy as an undergraduate before switching to experimental psychology). “If an idea sounds good in philosophy, it is good,” he says. “How would you ever have a philosophical idea that just sounds like rubbish, but actually turns out to be true? That wouldn’t pass as a philosophical idea.” Science, by contrast, is “full of things that sound like complete rubbish” but turn out to work remarkably well: for example, neural networks, he says.

GLOM is designed to sound philosophically plausible. But will it work?

